The popularity of AI tools such as Claude Code has surged in recent months as both programmers and non-programmers explore their accessible features. For scammers, this rising interest in AI products creates a new avenue for attack. Recently, Graphika identified scammers using Google ads to promote software that claims to install programs like Claude Code or ChatGPT. People who click these ads and follow the installation instructions may end up infecting their personal devices with malware that steals private information.

Key Points

- Scammers replicate Claude's and ChatGPT's branding to make their sites appear legitimate.

- Scammers use verified Google Ads accounts to build an appearance of credibility.

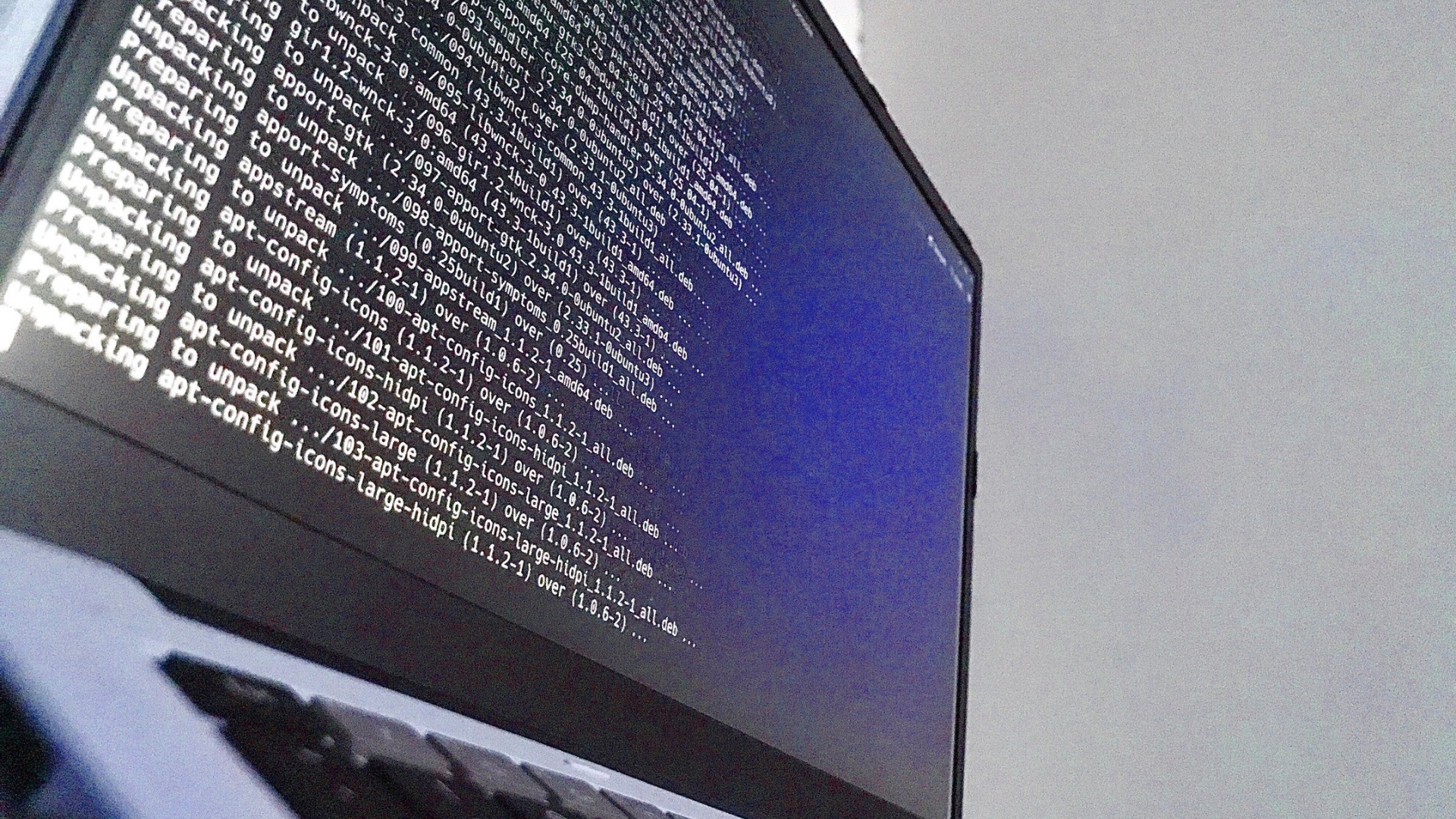

- Scammers deploy multiple infection methods, ranging from simple file downloads to instructions that victims run themselves in a terminal, such as "curl-to-bash" commands.

Targeting the Savvy Developer Community

In April 2026, we observed a spike in fraudulent ads targeting keywords like "get claude code" and "claude design." These ads, disguised as legitimate sponsored results on Google, led users to potentially dangerous sites, such as claude-code-macos[.]com.

Once a victim clicks an ad, the page they land on is hard to distinguish from the real company's pages. For instance, a site hosted at claudesktop.gitlab[.]io closely mimics Anthropic’s official branding. It instructs visitors to run a specific PowerShell command, a snippet of code that a tech-savvy visitor would paste into the command line on their Windows computer. Once the command runs, malware is installed and the device is infected.
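One way to picture a first-pass check for such lookalike sites is a small filter that flags hostnames containing a brand keyword but not belonging to an official domain. The sketch below is illustrative only: the allowlist, the keyword list, and all function names are assumptions made for this example, not a description of how Graphika or any vendor detects these sites.

```python
from urllib.parse import urlparse

# Assumption: an illustrative allowlist of official domains; maintain your own.
OFFICIAL_DOMAINS = {"claude.ai", "anthropic.com", "chatgpt.com", "openai.com"}
# Assumption: brand keywords worth flagging when they appear on unofficial hosts.
BRAND_TOKENS = ("claude", "anthropic", "chatgpt", "openai")


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'example[.]com' back into 'example.com'."""
    return indicator.replace("[.]", ".").replace("hxxp", "http")


def hostname_of(indicator: str) -> str:
    """Extract a lowercase hostname from a URL or a bare domain."""
    text = refang(indicator.strip())
    if "://" not in text:
        text = "//" + text  # lets urlparse treat a bare domain as a host
    return (urlparse(text).hostname or "").lower()


def is_official(host: str) -> bool:
    """True if the host is an allowlisted domain or one of its subdomains."""
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


def looks_like_impersonation(indicator: str) -> bool:
    """Flag hosts that use a brand keyword without being an official domain."""
    host = hostname_of(indicator)
    if not host or is_official(host):
        return False
    return any(token in host for token in BRAND_TOKENS)


if __name__ == "__main__":
    for item in ("claude-code-macos[.]com", "claudesktop.gitlab[.]io", "https://claude.ai/download"):
        print(item, looks_like_impersonation(item))
```

A keyword match like this is deliberately crude. It would also flag legitimate third-party pages that mention a brand in their hostname, so it is better suited to prioritizing sites for human review than to blocking outright.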

On X, the Web3 brand-protection entity Lawyerd posted a screenshot of a website linked from a Google ad promoting an inauthentic Claude Code installation.

Ongoing Trend of AI-Themed Ad Fraud

This recent trend of impersonating Anthropic’s Claude Code product fits into a larger pattern we first noticed in late 2025. That earlier scam involved a verified Google advertiser running around 400 ads for a fake “ChatGPT Atlas” browser. The playbook was similar: the landing page mimicked ChatGPT branding and instructed users to run a “curl-to-bash” command in their terminal, which installed malware on their device.
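For readers unfamiliar with the term, a "curl-to-bash" command downloads a script and pipes it straight into a shell, so the code runs before anyone has read it. The following is a minimal sketch of how copied installation instructions could be screened for that shape. The regular expressions and the function name `is_pipe_to_shell` are assumptions made for illustration, the URLs are placeholders, and the patterns cover only the simplest piped forms.

```python
import re

# Downloader piped into a Unix shell, e.g. "curl -fsSL <url> | bash".
UNIX_PIPE = re.compile(
    r"\b(?:curl|wget)\b[^|\n]*\|\s*(?:sudo\s+)?(?:ba|z|da)?sh\b",
    re.IGNORECASE,
)

# PowerShell download piped into Invoke-Expression, e.g. "irm <url> | iex".
POWERSHELL_PIPE = re.compile(
    r"\b(?:iwr|irm|invoke-webrequest|invoke-restmethod)\b[^|\n]*"
    r"\|\s*(?:iex|invoke-expression)\b",
    re.IGNORECASE,
)


def is_pipe_to_shell(command: str) -> bool:
    """Return True if the text pipes a remote download directly into a shell."""
    return bool(UNIX_PIPE.search(command) or POWERSHELL_PIPE.search(command))


if __name__ == "__main__":
    samples = [
        "curl -fsSL https://example.invalid/install.sh | bash",
        "irm https://example.invalid/install.ps1 | iex",
        "curl -O https://example.invalid/archive.zip",
    ]
    for sample in samples:
        print(is_pipe_to_shell(sample), sample)
```

Legitimate projects also publish pipe-to-shell installers, so a match says nothing about intent on its own. It only signals that the source of the command deserves a second look before anything is executed.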

In both cases, scammers are exploiting the demand for AI software for their own gain.

How Do Scammers Maintain Their Facade?

Scammers often set up corporate fronts that appear legitimate but don't engage in any real business activities. For example, the Google ads for the ChatGPT application were posted by an Estonian company called CoinSun OÜ. It had been registered since 2019, but public records showed no significant revenue or employees. These scammers also favor hosting platforms such as GitLab, which hide registration details while lending the trust associated with the parent site.

The infrastructure used by these scammers is often short-lived. Many of the domains we’ve tracked, such as last-version.install-2026[.]com, were registered just days before their ads appeared. This quick turnover helps them evade security filters that rely on domain reputation.
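Because reputation-based filters have little history to work with for such domains, the registration date itself can serve as a signal. The sketch below assumes the registration date has already been obtained from a WHOIS or RDAP lookup and that the date the ad was first seen is known. The function names, the 30-day threshold, and the dates in the usage example are all hypothetical.

```python
from datetime import date


def domain_age_days(registered_on: date, first_seen_in_ad: date) -> int:
    """Number of days between domain registration and the ad's first sighting."""
    return (first_seen_in_ad - registered_on).days


def is_freshly_registered(
    registered_on: date,
    first_seen_in_ad: date,
    max_age_days: int = 30,  # assumption: tune this threshold to your own risk tolerance
) -> bool:
    """Flag domains that were registered shortly before being advertised."""
    age = domain_age_days(registered_on, first_seen_in_ad)
    return 0 <= age <= max_age_days


if __name__ == "__main__":
    # Hypothetical dates, for illustration only.
    print(is_freshly_registered(date(2026, 4, 1), date(2026, 4, 4)))
    print(is_freshly_registered(date(2019, 6, 1), date(2026, 4, 4)))
```

Domain age is a weak signal by itself, since new legitimate products launch on new domains too. It becomes more useful when combined with other indicators, such as brand keywords in the hostname or installation instructions that ask users to run commands.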

Managing "Malvertising" Risks for Organizations

Organizations that handle sensitive data operate in an environment where search results cannot be taken at face value. Because these scams exploit existing ad ecosystems and mimic trusted brands, they can catch even tech-savvy users off guard when searching for productivity tools, which makes them a high risk.

To protect your team, it's crucial to go beyond basic blocklists. Ongoing monitoring of the ad ecosystem and the discussions around AI tools is essential to identify these threats before they lead to serious security breaches.

Stay Ahead of Malware Scams

We offer tailored threat briefings that show how we track emerging fraudulent narratives and scam advertising campaigns affecting your team or brand. Book a demo.